This is the article where I tell you that upgrading your AI model might make your results worse. Not in an edge case. Not on a weird prompt. On the same prompt, the same concept, the same scoring rubric, the "premium" tier model scored 0.67 points below the base model. And the tier above that scored 1.04 points below.

Flux 1.1 Pro (@bfl_ml): 8.50 average on the lightbulb. Flux 1.1 Pro Ultra: 7.83. Flux 1.1 Pro Ultra Raw: 7.46.

A clean downward line from base to premium to ultra-premium. And this is not the first time I have seen it. The same regression pattern appeared in a previous video generation shootout with entirely different models and tools. "Premium" in the model name is marketing language, not a quality guarantee. The data says so.

The Three Tiers

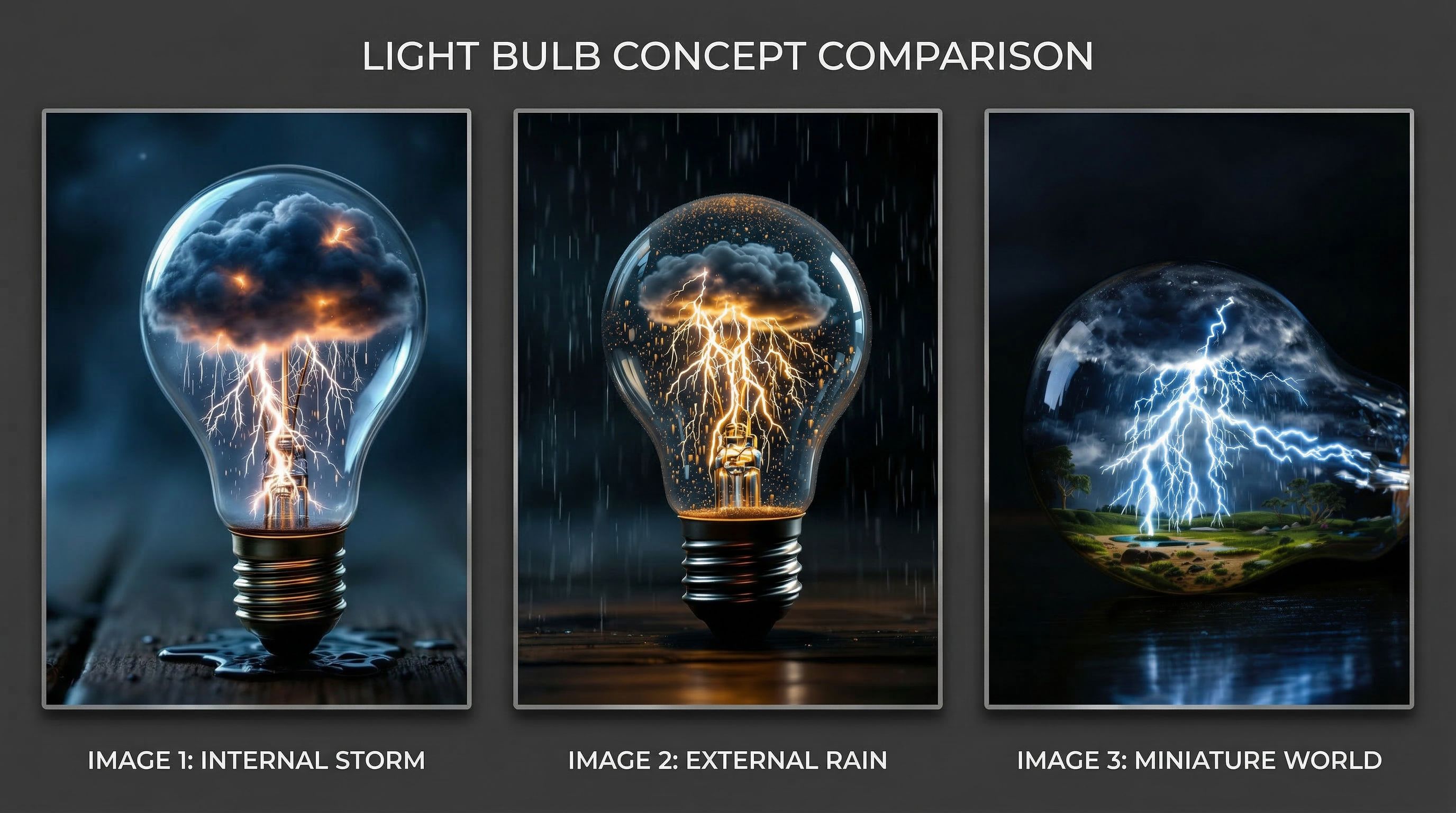

All three Flux 1.1 variants received the same prompt, four images each, scored on the same weighted rubric. Here is the lightbulb prompt for reference:

A clear glass lightbulb sitting on a dark wooden table, inside the bulb a complete miniature thunderstorm is raging with tiny dark clouds and bright lightning bolts illuminating the glass from within, rain falling inside the bulb with tiny puddles collecting at the bottom, the lightning casts dramatic light through the glass onto the table surface, macro photography, 85mm lens, shallow depth of field, dark moody background, atmospheric haze, professional product photography

The full dimension breakdown:

| Dimension | Flux 1.1 Pro | Ultra | Ultra Raw |
|---|---|---|---|
| Visual Quality (30%) | 8.75 | 8.25 | 8.25 |
| Prompt Alignment (25%) | 8.13 | 7.63 | 7.50 |
| Consistency (15%) | 8.13 | 7.38 | 5.50 |
| Uniqueness (15%) | 8.50 | 7.63 | 7.50 |
| X Engagement (15%) | 9.00 | 8.00 | 7.75 |
| Composite | 8.50 | 7.83 | 7.46 |

Every single dimension drops from Pro to Ultra. From Ultra to Ultra Raw, visual quality holds flat at 8.25 and the other four dimensions drop again. There is no trade-off happening here. It is not that Ultra sacrifices consistency for visual quality, or that Ultra Raw sacrifices prompt alignment for uniqueness. No metric improves at either step.
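If you want to check the composite row yourself, here is a minimal Python sketch. It assumes the composite is a plain weighted sum of the five dimension averages, which is my reading of the rubric and happens to reproduce the published numbers. The names `WEIGHTS`, `TIERS`, `composite`, and `deltas` are only illustrative.

```python
# Rubric weights, taken from the dimension table above.
WEIGHTS = {
    "Visual Quality": 0.30,
    "Prompt Alignment": 0.25,
    "Consistency": 0.15,
    "Uniqueness": 0.15,
    "X Engagement": 0.15,
}

# Per-dimension averages from the table, in the same order as WEIGHTS.
TIERS = {
    "Flux 1.1 Pro": [8.75, 8.13, 8.13, 8.50, 9.00],
    "Ultra": [8.25, 7.63, 7.38, 7.63, 8.00],
    "Ultra Raw": [8.25, 7.50, 5.50, 7.50, 7.75],
}


def composite(scores):
    """Weighted sum of dimension scores, rounded to two decimals.

    Assumption: the rubric combines dimensions as a simple weighted sum.
    """
    return round(sum(w * s for w, s in zip(WEIGHTS.values(), scores)), 2)


def deltas(lower_tier, higher_tier):
    """Change in each dimension when moving from one tier to the next."""
    return {
        dim: round(b - a, 2)
        for dim, a, b in zip(WEIGHTS, TIERS[lower_tier], TIERS[higher_tier])
    }


if __name__ == "__main__":
    for name, scores in TIERS.items():
        print(f"{name}: {composite(scores):.2f}")
    print(deltas("Flux 1.1 Pro", "Ultra"))
    print(deltas("Ultra", "Ultra Raw"))
```

The `deltas` output is the quickest way to see whether a tier is trading one dimension for another: a real trade-off would show at least one positive number, and neither step here does.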

Same prompt. Same family. Three tiers. The base model's best (8.88) outscores the premium tier's best (8.58), which outscores the ultra-premium tier's best (8.13). The "upgrade" downgrades everything.

What Goes Wrong at Each Tier

The scores tell you there is a regression. The images tell you why.

Flux 1.1 Pro (8.50 avg) was the session's most narratively interesting model. Warm amber lightning instead of blue-white. The filament reimagined as the storm's power source rather than just a glass element. Teal-tinted backgrounds with shallow depth of field that gave the images a fine art product photography feel. F11-3 scored 8.88, the second-highest single image in Round 1 after GPT's 9.15. The base model had a clear creative identity.

Flux 1.1 Pro Ultra (7.83 avg) lost that identity. F11U-3 hallucinated miniature mechanical figures inside the bulb that were never prompted. F11U-4 generated a full-size lightning bolt in the background behind the bulb, as though the storm were generating weather outside itself. F11U-1 produced generic stock-quality output with no distinguishing characteristics. The single bright spot was F11U-2 at 8.58, which had golden particles inside the glass. But one good image out of four is not a premium experience. The consistency score dropped from 8.13 to 7.38.

Flux 1.1 Pro Ultra Raw (7.46 avg) went further. The consistency score collapsed to 5.50, the lowest of any model in the session. The four images look like they came from four different models. F11UR-4 scored 6.90, the session's lowest single image across all 176. But here is the twist: F11UR-1 scored 8.13 and was the session's most conceptually interesting single image from any Flux variant. It built an entire landscape inside the lightbulb. Trees, grass, a pond, rocks, structures, with the thunderstorm above. It was the closest any model came to a true "miniature world" rather than just "weather in glass."

Ultra Raw's best image was genuinely creative. Its worst was the session's floor. The model swung between breakthrough and disaster within four images.

Flux 1.1 Pro had a creative identity: warm amber lightning, filament as power source, fine art product feel. Each upgrade stripped more of that identity away.

The Consistency Collapse

The dimension that tells the clearest story is consistency.

| Tier | Consistency Score | Range (Best to Worst) |
|---|---|---|
| Flux 1.1 Pro | 8.13 | 0.93 (8.88 to 7.95) |
| Ultra | 7.38 | 1.25 (8.58 to 7.33) |
| Ultra Raw | 5.50 | 1.23 (8.13 to 6.90) |

Pro's four images feel like a coherent set. You could display them together and they would read as variations on a theme. Ultra's four images have one standout and three weaker attempts. Ultra Raw's four images look like they were generated by different software.
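The range column is simple arithmetic on each tier's best and worst image scores. A small Python sketch, using only the numbers in the table (the `TIERS` dictionary and `score_range` helper are illustrative names, not part of any tool):

```python
# Best and worst single-image scores per tier, plus the consistency
# dimension score, all copied from the table above.
TIERS = {
    "Flux 1.1 Pro": {"consistency": 8.13, "best": 8.88, "worst": 7.95},
    "Ultra": {"consistency": 7.38, "best": 8.58, "worst": 7.33},
    "Ultra Raw": {"consistency": 5.50, "best": 8.13, "worst": 6.90},
}


def score_range(best, worst):
    """Gap between a tier's best and worst image, rounded to two decimals."""
    return round(best - worst, 2)


if __name__ == "__main__":
    for name, t in TIERS.items():
        spread = score_range(t["best"], t["worst"])
        print(f"{name}: consistency {t['consistency']:.2f}, range {spread:.2f}")
```

Note that Ultra and Ultra Raw have almost the same score range while their consistency scores are far apart. Presumably that is because the range measures how far the image scores spread, while the consistency dimension rates how much the four images look like a set, so it is worth tracking both.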

This pattern hints at what the "premium" tiers are actually doing. My best theory, which I have not verified: the base model has been optimized for a specific rendering pipeline. The Ultra and Raw variants are tuned to produce higher-resolution or more "photographic" results, but that tuning destabilizes the coherence of the output. You get more pixels but less control over what those pixels depict.

The analogy is a camera. A well-calibrated kit lens produces consistently good results. A specialty lens can produce extraordinary images under the right conditions, but it is harder to control and more likely to produce unusable shots. The premium tier is the specialty lens. The problem is that AI model marketing sells it as a better kit lens.

Four images from the base model look like a set. Four images from Ultra Raw look like four different models had a go. Consistency is the first thing premium tiers sacrifice.

The Broader Flux Family

The regression is not limited to the 1.1 line. Here is every Flux-family model tested in Round 1, ranked by composite score:

| Rank | Model | Avg Score |
|---|---|---|
| 1 | Flux 1.1 Pro | 8.50 |
| 2 | Flux 2 Pro | 8.31 |
| 3 | Flux 1 Kontext Max | 8.10 |
| 4 | Flux 1 Kontext Pro | 8.10 |
| 5 | Flux 1.1 Pro Ultra | 7.83 |
| 6 | Flux 1.1 Pro Ultra Raw | 7.46 |

Six Flux variants. The base 1.1 Pro beats every other variant in the family. The two "premium" tiers finish last. And the Kontext models, which are entirely different products optimized for different use cases, both outperform Ultra and Ultra Raw.

Flux 2 Pro is a newer generation and scores 8.31, below the older 1.1 Pro's 8.50. Newer is not automatically better either.

The Kontext models have their own issue: 7 of 8 images across both variants were rendered sideways or fallen. That is a family-level behavioral trait, not a one-off. But even with that orientation problem dragging their scores down, they still outperform the "premium" tiers.

Six models from the same family. The simplest, oldest variant beat every upgrade, every premium tier, every newer generation. The model hierarchy on paper does not match the model hierarchy in practice.

Cross-Domain Confirmation

This is not an isolated finding. In a previous session testing video generation models, I found the same pattern with entirely different tools and workflows. Higher-tier model variants scored below their base counterparts. Premium pricing did not predict premium output.

Two domains. Different models. Different media types. Different scoring sessions. Same regression pattern. At this point I treat it as a working principle: when a model family offers tiered variants, the base model is the default starting point. Premium tiers need to earn their position through testing, not assume it through naming.

What This Means for Your Workflow

The practical takeaway is straightforward. Do not assume that upgrading to a premium tier or a newer version will improve your results. Test it. Four images. Score them. Compare.

Specifically:

Start with the base model in any family. For Flux, that is 1.1 Pro. Run your prompt. Score the output.

If you want to test a premium tier, run the same prompt and compare directly. Do not judge by the best single image. Judge by the average and the consistency. A premium tier that produces one great image and three bad ones is not an upgrade.

Watch for consistency collapse. If the four images from a premium tier look like they came from different models, that is the regression signal. The tier has more range but less control.

Version numbers do not guarantee improvement. Flux 2 Pro scored below Flux 1.1 Pro on this prompt. Newer releases may be optimized for benchmarks that do not align with your creative goals.
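The comparison steps above can be sketched as a small Python helper. Everything here is hypothetical: the `TierResult` class, the `is_upgrade` rule, its thresholds, and the example scores are placeholders I made up to show the shape of the check, not numbers from the session.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TierResult:
    """Scores for one model tier on one prompt (one score per image)."""
    name: str
    image_scores: list

    @property
    def average(self):
        return mean(self.image_scores)

    @property
    def spread(self):
        # Best-to-worst gap, a rough stand-in for consistency.
        return max(self.image_scores) - min(self.image_scores)


def is_upgrade(base, candidate, min_gain=0.0, max_extra_spread=0.25):
    """Hypothetical rule: the candidate must beat the base on average
    without adding much spread. Both thresholds are arbitrary defaults."""
    gain = candidate.average - base.average
    extra_spread = candidate.spread - base.spread
    return gain > min_gain and extra_spread <= max_extra_spread


if __name__ == "__main__":
    # Made-up scores: the premium tier has the single best image
    # but a lower average and a much wider spread.
    base = TierResult("base", [8.0, 8.2, 8.1, 8.3])
    premium = TierResult("premium", [9.0, 7.0, 7.2, 7.1])
    print(is_upgrade(base, premium))
```

The rule deliberately ignores the best single image. In the made-up example the premium tier produces the top-scoring picture and still fails the check, which is the one-great-image-and-three-bad-ones case described above.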

The lightbulb prompt is reproduced above and in Part 1 of this series. Try it across tiers in your preferred model family and see if the pattern holds. In my experience, it keeps showing up.

Next in the Series

I put a storm in a coffee cup. Four models. Four completely different solutions. What happens when you give AI a problem it cannot see through.

Glenn is an Adobe Firefly Ambassador and AI creator documenting the craft of prompt engineering at @GlennHasABeard. He publishes The Render newsletter and creates the Stor-AI Time series adapting world folktales through AI-generated video.

This is Part 4 of a five-part series analyzing 176 scored AI images across 12 models, 5 containers, and 5 ecosystems. Part 1: "I Scored 176 AI Images." Part 2: "The Container That Turned a 6th-Place Model Into #1." Part 3: "Every AI Model Agreed on the Best Miniature World."