In yesterday's article I ranked 12 AI models on a thunderstorm in a lightbulb. Nano Banana Pro (@NanoBanana) placed 6th with an 8.26 average. Respectable. Forgettable. Nowhere near the top.

Then I changed the container to a pocket watch. Same storm. Same prompt structure. Same scoring rubric. NB Pro's average jumped to 8.89 and its best single image hit 9.23, the highest individual score in the entire 176-image session. Not GPT Image 1.5 (@ChatGPTapp). Not Firefly Image 5 (@AdobeFirefly). The model that placed 6th produced the session's best single image.

A 0.97-point swing from its lightbulb average to its pocket watch peak. Same model. Different container.

This article is the story of why that happened and what it reveals about how AI models actually interpret prompts. If you have been choosing containers based on what looks cool, the data suggests a different question: what does your model do well, and which container rewards that behavior?

The Lightbulb Problem

NB Pro's lightbulb performance tells a story if you read beyond the average. Its four scores were 8.58, 8.90, 8.18, and 7.38. That 7.38 is the lowest individual score from any model in the top 8. But the 8.90 was the second-highest single image in Round 1, beaten only by GPT's 9.15.

The consistency score was 7.63. For context, Firefly scored 8.88 on consistency and Imagen 4 (@GoogleAIStudio) scored 9.00. NB Pro was wildly inconsistent, swinging between the round's second-best image and the lowest score among the top 8 models within the same four images.

Here is what made NB Pro different from every other model on the lightbulb: it refused to stay inside the glass. Every image had lightning escaping onto the table. Warm golden caustic light radiating through the glass. Lichtenberg-figure branching patterns on the wood surface. Condensation on the exterior. And every image placed the bulb in a room, not on a studio surface. Blurred shelves, workshop backgrounds, pendant lights in the bokeh.

The model with the lowest Prompt Alignment (7.38 average across that dimension) had the highest X Engagement Potential (8.88). It ignored instructions and gave you what it thought looked better.

NB Pro's best lightbulb. 8.90. Lightning crawling across the table, warm caustics through glass, workshop background. The model that follows prompts least produces the most shareable images.

That tension between accuracy and artistry is exactly what makes NB Pro interesting. But the lightbulb constrained its strongest behavior. A lightbulb is a familiar object. "Weather inside a lightbulb" has stock photography precedent. The training data has opinions about what a lightbulb looks like, and those opinions fight against NB Pro's instinct to break containment and build an environment.

The pocket watch removed that fight.

What the Pocket Watch Changed

An open antique pocket watch has no stock photography precedent for "weather inside a timepiece." There is no template in the training data for what this should look like. Every model had to reason from scratch.

Here is the prompt:

An open antique pocket watch sitting on a dark wooden table, inside the watch face a complete miniature thunderstorm is raging with tiny dark clouds and bright lightning bolts illuminating the watch glass from within, rain falling inside the watch with tiny puddles collecting on the watch mechanism, the lightning casts dramatic light through the watch crystal onto the table surface, macro photography, 85mm lens, shallow depth of field, dark moody background, atmospheric haze, professional product photography

NB Pro's four pocket watch images scored 9.23, 8.78, 8.78, and 8.78. Average: 8.89. The consistency score that was 7.63 on the lightbulb jumped dramatically. Three of four images scored identically. The model stabilized.
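The headline numbers can be checked from the per-image scores quoted in this article. The sketch below is only arithmetic on those eight scores. The variable names are illustrative, and it does not reproduce the rubric's separate consistency dimension.

```python
from statistics import mean

# NB Pro per-image scores as quoted in the article.
lightbulb = [8.58, 8.90, 8.18, 7.38]
pocket_watch = [9.23, 8.78, 8.78, 8.78]

lightbulb_avg = round(mean(lightbulb), 2)
pocket_watch_avg = round(mean(pocket_watch), 2)

# Average-to-average lift between the two containers.
avg_lift = round(pocket_watch_avg - lightbulb_avg, 2)

# Lightbulb average to the best single pocket watch image.
peak_swing = round(max(pocket_watch) - lightbulb_avg, 2)

print(f"Lightbulb avg:    {lightbulb_avg}")
print(f"Pocket watch avg: {pocket_watch_avg}")
print(f"Average lift:     {avg_lift}")
print(f"Peak swing:       {peak_swing}")
```

The 0.97 figure compares the lightbulb average with the single best pocket watch image. On averages alone the lift is 0.63.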

But the real story is NBP-PW-1 at 9.23.

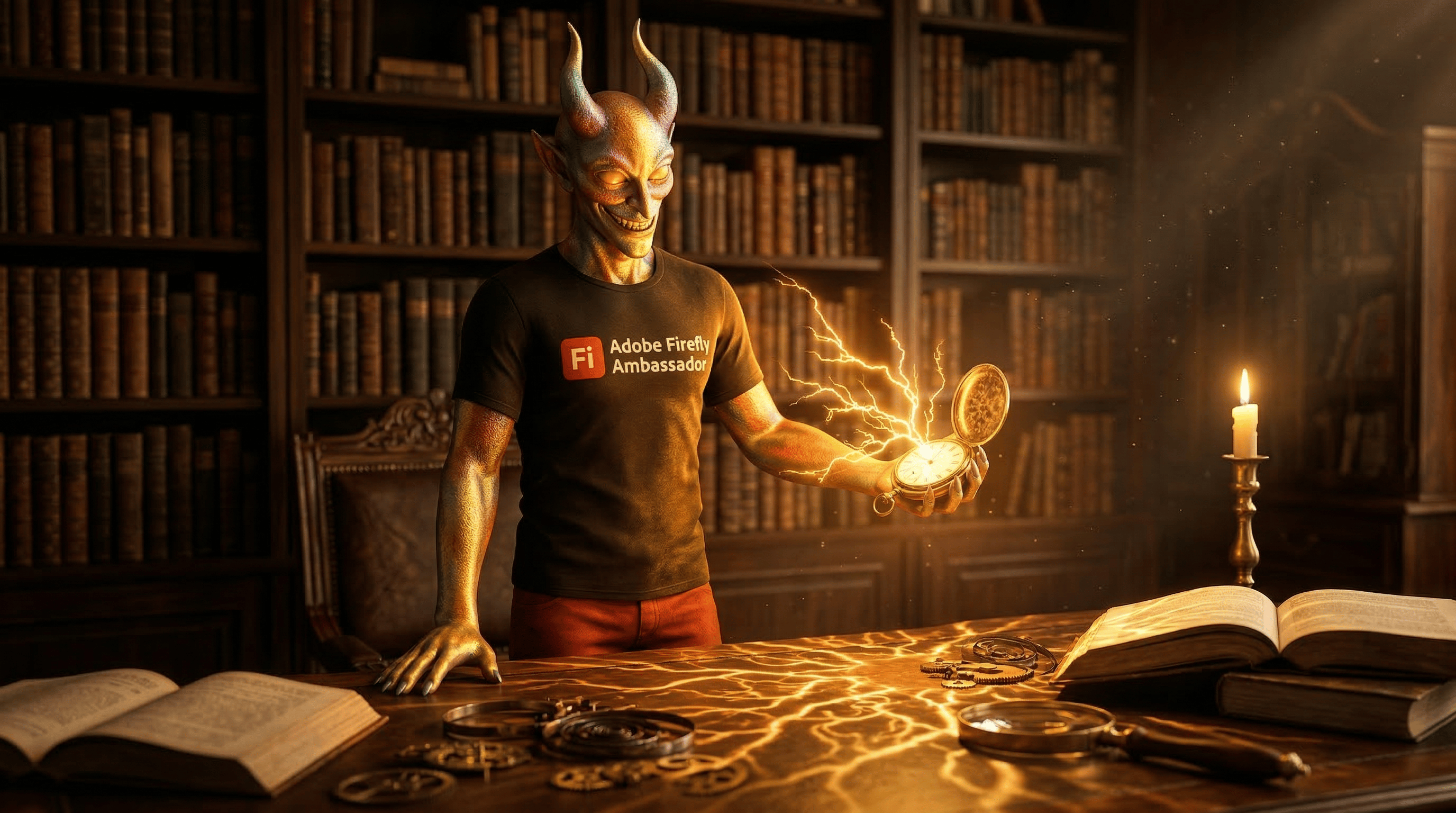

9.23. The session's best single image across all 176 scored. Clockwork visible beneath the storm. Lightning casting amber patterns across the watch mechanism. A model that placed 6th on a lightbulb produced the best image of the entire session on a pocket watch.

What NB Pro did with the pocket watch combined every behavioral signature that the lightbulb had constrained. The clockwork mechanism beneath the storm gave the lightning somewhere to go. Escaped light became escaped light across gears and springs, not just a flat table surface. The study and library environments that NB Pro defaults to felt natural with an antique pocket watch in a way they never did with a modern lightbulb. The warm caustic patterns that were fighting against the lightbulb's stock-photography-clean aesthetic became part of the pocket watch's inherent character.

The container did not make NB Pro better. It made NB Pro more itself.

The Stock Familiarity Principle

This is where the data gets interesting. Each model's container ranking tells a story about what drives its output quality.

Firefly Image 5 container ranking: Snow Globe (8.42) > Coffee Cup (8.37) > Lightbulb (8.31) > Pocket Watch (8.29) > Mason Jar (8.08)

GPT Image 1.5 container ranking: Pocket Watch (9.07) > Coffee Cup (8.95) > Snow Globe (8.88) > Mason Jar (8.77) > Lightbulb (8.75)

NB Pro container ranking: Pocket Watch (8.89) > Snow Globe (8.39) > Mason Jar (8.35) > Coffee Cup (8.33) > Lightbulb (8.26)

Firefly peaks on the snow globe, the most stock-familiar container for "miniature world inside glass." GPT peaks on the pocket watch, the least stock-familiar container. NB Pro follows GPT's pattern, not Firefly's.

The gap between models changes depending on what container you give them. On the pocket watch: GPT 9.07, NB Pro 8.89, Flux 1.1 Pro (@bfl_ml) 8.43, Firefly 8.29. On the snow globe: GPT 8.88, Flux 8.45, Firefly 8.42, NB Pro 8.39.

The pocket watch is the most polarizing container in the session. The gap between GPT and Firefly on the pocket watch is 0.78 points. On the snow globe, it is 0.46 points. Unfamiliar containers separate models that reason from physics (GPT, NB Pro) from models that match training patterns (Firefly).
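A rough way to reproduce the gap figures, using only the four model averages quoted for each container. The dictionary layout and the helper names `gap` and `spread` are assumptions made for illustration, not part of the scoring process.

```python
# Container averages quoted in the article for the four models listed.
averages = {
    "Pocket Watch": {"GPT": 9.07, "NB Pro": 8.89, "Flux": 8.43, "Firefly": 8.29},
    "Snow Globe": {"GPT": 8.88, "Flux": 8.45, "Firefly": 8.42, "NB Pro": 8.39},
}


def gap(container: str, model_a: str, model_b: str) -> float:
    """Difference in container average between two named models."""
    scores = averages[container]
    return round(scores[model_a] - scores[model_b], 2)


def spread(container: str) -> float:
    """Top-to-bottom spread across the models listed for a container."""
    scores = averages[container].values()
    return round(max(scores) - min(scores), 2)


for name in averages:
    print(name, "GPT vs Firefly:", gap(name, "GPT", "Firefly"), "spread:", spread(name))
```

On these numbers the GPT to Firefly gap is wider on the pocket watch than on the snow globe, and so is the overall spread across the four models.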

Two models, same storm, same pocket watch. One rendered clockwork beneath the clouds. The other rendered a clean glass disc. The container exposed what each model actually understands.

This is not a criticism of Firefly. On the snow globe, Firefly produced clean, consistent, publication-ready results. If you need reliable output with minimal variance, Firefly on a stock-familiar container is the safest path. But if you are pushing for peak scores and willing to accept some inconsistency, the data says: give NB Pro or GPT something they have never seen before.

The Behavioral Signature Theory

NB Pro's pocket watch performance confirms something I have been tracking across sessions. Models have persistent behavioral signatures that show up regardless of prompt content.

The Nano Banana family signature across this entire session:

Escaped containment: Lightning on the lightbulb, caustic light on the pocket watch, bioluminescent glow on the coral reef, green atmospheric leak on the forest, prismatic beams on the galaxy, sand particles on the desert. Every ecosystem, every container, the NB family breaks the boundary.

Environmental context: Workshop backgrounds on the lightbulb, study and library environments on the pocket watch. The model always builds a room around the object.

Warm tonality: Amber, gold, and warm brass tones dominate. Even when the prompt specifies "dark moody background," NB Pro adds warmth.

The pocket watch worked because it aligned with all three signatures simultaneously. An antique mechanical object in a warm study, with intricate mechanisms that reward the "escaped light" behavior. The container was not neutral. It was a behavioral amplifier.

Compare that to the lightbulb, which actively fought the signature. A modern glass object on a clean surface. No room to build. No mechanism for light to interact with. The container was a behavioral suppressor.

The NB Pro signature: warm room, escaped light, environmental context. The lightbulb suppressed all three. The pocket watch amplified all three.

This has a practical application. Before choosing a container for your next prompt, ask: what does my model tend to do? Then pick a container that rewards that tendency instead of fighting it.

The Numbers Behind the Swing

Here is NB Pro's full container data, because the progression tells the story better than any summary:

| Container | Avg | Best | Worst | Range |
|---|---|---|---|---|
| Lightbulb (R1) | 8.26 | 8.90 | 7.38 | 1.52 |
| Coffee Cup | 8.33 | 8.58 | 8.08 | 0.50 |
| Mason Jar | 8.35 | 8.68 | 8.15 | 0.53 |
| Snow Globe | 8.39 | 8.68 | 8.15 | 0.53 |
| Pocket Watch | 8.89 | 9.23 | 8.78 | 0.45 |

The pocket watch did two things. It raised the average by 0.63 points from the lightbulb. And it cut the range from 1.52 to 0.45. The model got better and more consistent at the same time. That combination almost never happens. Usually when a model's average rises, variance increases because you are pushing into unfamiliar territory. The pocket watch pushed into unfamiliar territory and stabilized the output simultaneously.

That is the sign of a behavioral match. The container aligned so well with what NB Pro naturally does that the model stopped fighting itself.
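The "better and more consistent" claim can be checked directly against the table. This sketch recomputes each range from the best and worst scores, then asks which container has the highest average and which has the tightest range. The `rows` structure is an assumed layout for illustration.

```python
# (average, best, worst) per container, copied from the NB Pro table.
rows = {
    "Lightbulb (R1)": (8.26, 8.90, 7.38),
    "Coffee Cup": (8.33, 8.58, 8.08),
    "Mason Jar": (8.35, 8.68, 8.15),
    "Snow Globe": (8.39, 8.68, 8.15),
    "Pocket Watch": (8.89, 9.23, 8.78),
}

# Range is simply best minus worst, rounded to two decimals.
ranges = {name: round(best - worst, 2) for name, (_, best, worst) in rows.items()}

highest_avg = max(rows, key=lambda name: rows[name][0])
tightest_range = min(ranges, key=ranges.get)

for name, (avg, _, _) in rows.items():
    print(f"{name:<15} avg {avg:.2f}  range {ranges[name]:.2f}")
print("Highest average:", highest_avg)
print("Tightest range: ", tightest_range)
```

Both lookups return the pocket watch, which is the pattern the paragraph above describes.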

What This Means for Your Prompts

The traditional approach to prompt engineering focuses on language. Better adjectives. More specific descriptions. Clearer instructions. That matters. But this data shows that object selection, the container you choose before you write a single descriptive word, can swing your results by nearly a full point.

Here is the framework I am using now:

Identify your model's behavioral signature. Run 4 images of the same prompt and look for patterns. Does it escape containment? Build environments? Add warmth? Stay clinical?

Choose containers that amplify those behaviors, not suppress them. If your model adds environmental context, give it an object with a natural environment (antique pocket watch in a study, mason jar in a kitchen). If your model stays clinical, give it a clinical container (lightbulb, laboratory flask).

Test the unfamiliar. The pocket watch had zero stock precedent. That forced every model to reason from first principles. Models that reason well (GPT, NB Pro) excelled. Firefly, which leans on familiar patterns, averaged 8.29, its second-lowest container. If your model reasons well, stop giving it easy containers.
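One way to put the framework into practice is to score a handful of containers per model and let the averages pick the winner. The sketch below does that with the container averages from this article. The function `best_container` and the data layout are hypothetical, and it assumes you already have your own scores to substitute in.

```python
# Container averages per model, as listed earlier in the article.
container_avgs = {
    "Firefly Image 5": {"Snow Globe": 8.42, "Coffee Cup": 8.37, "Lightbulb": 8.31,
                        "Pocket Watch": 8.29, "Mason Jar": 8.08},
    "GPT Image 1.5": {"Pocket Watch": 9.07, "Coffee Cup": 8.95, "Snow Globe": 8.88,
                      "Mason Jar": 8.77, "Lightbulb": 8.75},
    "NB Pro": {"Pocket Watch": 8.89, "Snow Globe": 8.39, "Mason Jar": 8.35,
               "Coffee Cup": 8.33, "Lightbulb": 8.26},
}


def best_container(model: str) -> tuple[str, float]:
    """Return the top-scoring container and its lift over the model's worst one."""
    scores = container_avgs[model]
    best = max(scores, key=scores.get)
    worst = min(scores, key=scores.get)
    return best, round(scores[best] - scores[worst], 2)


for model in container_avgs:
    container, lift = best_container(model)
    print(f"{model}: {container} (+{lift} over its weakest container)")
```

The lift value shows how much container choice alone moves each model. On this data NB Pro is the most container-sensitive of the three.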

The prompt for the pocket watch is above. Try it in your model. Then try it with a container that matches what your model naturally does. The swing might surprise you.

Tomorrow

The forest snow globe. Four models. Sixteen images. Not one scored below 8.78. The god ray principle, the green-gold palette formula, and the copy-paste prompt that produced the session's highest average across any model and ecosystem combination.

Glenn is an Adobe Firefly Ambassador and AI creator documenting the craft of prompt engineering at @GlennHasABeard. He publishes The Render newsletter and creates the Stor-AI Time series adapting world folktales through AI-generated video.

This is Part 2 of a five-part series analyzing 176 scored AI images across 12 models, 5 containers, and 5 ecosystems. Part 1: "I Scored 176 AI Images. The Model Mattered Less Than I Thought."