There's a version of this newsletter where I only share the wins. The 9.85 scores, the competition entries, the prompts that cracked some new ceiling and made me think I'd figured something out. That version is easier to write and probably easier to read.

But it would be missing half the methodology.

I approach everything I make from a craftsman's mindset. I'm a luthier by trade. In the shop, data isn't the opposite of creativity. It's what keeps you from cutting the wrong piece twice. The same logic applies here.

Every research track I run (material transformation, impossible architecture, hybrid creatures, hidden objects, temporal portals) produces roughly as many failures as successes. I score them all the same way. Five dimensions, weighted rubric, composite out of ten. And the failures get documented just as carefully as the wins, because in AI prompting, a scored failure is data. An undocumented failure is just wasted credits.

Here's what several hundred images' worth of documented failures has actually taught me.

Firefly Doesn't Know What "Eldritch" Means

One of my more expensive lessons came from an eldritch-themed testing session. I had a clear vision: portraits where human anatomy was seamlessly integrated with Lovecraftian biology, something that would photograph like high-end editorial work but carry the weight of cosmic horror. I wrote prompts using words like "eldritch," "Lovecraftian," "cosmic dread," "unseeable geometry."

The images came back looking like generic fantasy concept art with tentacles attached to torsos.

The diagnosis was straightforward once I understood the problem: Firefly Image 5 is trained on Adobe Stock photography. It doesn't know H.P. Lovecraft. It doesn't have a reference for "eldritch" as a visual instruction. When I gave it literary mood words, it defaulted to whatever it statistically associated those terms with, and "tentacles + dramatic lighting" in Adobe Stock territory means fantasy character art, not photographic surrealism.

The fix wasn't finding better mood words. It was replacing the mood words entirely. "Eldritch" became "giant Pacific octopus anatomy with visible chromatophore color-changing cells." "Cosmic horror" became "barn owl facial disc seamlessly blended with human facial structure." Specific biological terminology. Named anatomical features. Material physics.
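If you keep prompts in a script, the substitution habit can be made mechanical: flag the mood words, then swap in the concrete terms that worked. This is a minimal Python sketch, not a tool from the testing itself. The names `MOOD_WORDS`, `SPECIFIC_TERMS`, `flag_mood_words`, and `make_specific` are hypothetical, and only the two substitutions described above are filled in.

```python
import re

# Literary mood words from the eldritch session that gave Firefly nothing concrete to render.
MOOD_WORDS = ["eldritch", "lovecraftian", "cosmic dread", "cosmic horror", "unseeable geometry"]

# Hypothetical substitution table: mood word -> specific, nameable subject matter.
# Only the two replacements described above are included; extend it with your own.
SPECIFIC_TERMS = {
    "eldritch": "giant Pacific octopus anatomy with visible chromatophore color-changing cells",
    "cosmic horror": "barn owl facial disc seamlessly blended with human facial structure",
}


def flag_mood_words(prompt: str) -> list[str]:
    """Return the mood words that appear in the prompt (case-insensitive)."""
    lowered = prompt.lower()
    return [word for word in MOOD_WORDS if word in lowered]


def make_specific(prompt: str, table: dict[str, str] = SPECIFIC_TERMS) -> str:
    """Swap each mood word found in the table for its concrete replacement."""
    result = prompt
    # Longest phrases first, so a multi-word entry is replaced before any shorter one inside it.
    for mood in sorted(table, key=len, reverse=True):
        replacement = table[mood]
        # A function as the replacement avoids re.sub interpreting backslashes in the text.
        result = re.sub(re.escape(mood), lambda _match: replacement, result, flags=re.IGNORECASE)
    return result


prompt = "Editorial portrait, cosmic horror, eldritch, studio lighting"
print(flag_mood_words(prompt))  # ['eldritch', 'cosmic horror']
print(make_specific(prompt))
```

Words with no known concrete replacement (here "lovecraftian", "cosmic dread", "unseeable geometry") are only flagged, not rewritten: the point is to force a decision about what specific thing you actually mean.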

This principle shows up everywhere in the data. In hybrid creature testing, "owl features" produced a 6.60 average. "Barn owl facial disc" produced 9.70. That 3.1-point gap doesn't come from better taste. It comes from replacing a vague category word with a specific named thing that Firefly's training data actually contains. The model cannot render your aesthetic vision. It can render specific things with precision.


The "Too Plausible" Trap

I spent three architectural testing sessions discovering a failure pattern I now call the "too plausible" problem. It took me that long because each instance seemed like an isolated disappointment.

First it was ice on a torii gate. Visually strong prompt, correct construction language, everything by the book. Score: 8.17. Uniqueness dimension: 7.0, the lowest of any well-executed prompt that session. I noted it and moved on.

Then it was hard candy on a rose window. Same story. 8.24 composite, uniqueness sitting at 7.0 again. I thought maybe I was missing something about transparency.

Then it was moss and living vines on a suspension bridge. 8.08. Uniqueness: 7.0.

Three subjects, three materials, same ceiling. Going back to the ice torii is where it clicked for me. I'd built something visually strong, technically correct, and genuinely beautiful. And it scored like something ordinary, because it was. The pattern finally read clearly: every one of these "impossible" materials had real-world architectural precedent. Ice sculpture festivals exist. Hard candy's translucency functions identically to stained glass. Meghalayan root bridges are a documented human construction practice. Firefly, trained on the documented world, knew all of this. The images scored well on execution and fell short on uniqueness because somewhere in its training data, these things existed.

The materials that broke past the ceiling shared one property: they were genuinely incompatible with their architecture's function. Red yarn knitted into a rose window (blocking the light the window is meant to transmit): 9.50. Candle wax cast into a suspension bridge (actively combusting while holding tension): 9.62. Soap bubbles forming a Gothic arch (visible thinning with the implication of imminent failure): 9.25.

The rule that emerged: if the material has a real-world analog at architectural scale, or worse, if it performs the same function as the original material, it will not score as "impossible." It will score as "slightly unusual." The gap between those two things is about 1.5 points on the composite.

Ice on a torii gate scored 8.17. Ice sculpture festivals exist. The model knew that.
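The rule above boils down to three yes/no questions you can answer before spending any credits. Here is a minimal Python sketch with hypothetical names (`MaterialIdea`, `expected_tier`). The flags are judgments you fill in yourself, and the output is a prediction based on the pattern described in this section, not a score.

```python
from dataclasses import dataclass


@dataclass
class MaterialIdea:
    """A proposed material-for-structure swap, with the three questions the rule asks."""
    material: str
    structure: str
    has_architectural_precedent: bool  # e.g. ice sculpture festivals, living root bridges
    serves_same_function: bool         # e.g. translucent candy standing in for stained glass
    contradicts_function: bool         # e.g. yarn blocking the light a window exists to admit


def expected_tier(idea: MaterialIdea) -> str:
    """Predict how the idea is likely to read, following the rule stated above."""
    if idea.has_architectural_precedent or idea.serves_same_function:
        return "slightly unusual"
    if idea.contradicts_function:
        return "impossible"
    return "untested"  # neither condition applies, so there is no evidence either way yet


# The six examples from this section, encoded by hand.
ideas = [
    MaterialIdea("ice", "torii gate", True, False, False),
    MaterialIdea("hard candy", "rose window", False, True, False),
    MaterialIdea("moss and living vines", "suspension bridge", True, False, False),
    MaterialIdea("red yarn", "rose window", False, False, True),
    MaterialIdea("candle wax", "suspension bridge", False, False, True),
    MaterialIdea("soap bubbles", "Gothic arch", False, False, True),
]
for idea in ideas:
    print(f"{idea.material} / {idea.structure}: {expected_tier(idea)}")
```

The precedence is deliberate: a material that both has precedent and contradicts the structure's function still lands in "slightly unusual", because the model has seen it before.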

Subtlety Is Almost Always a Mistake

In early hybrid creature testing, I ran a variation where I deliberately asked for subtle integration. The hypothesis was reasonable: a wolf that almost has owl features would look more photographic, more plausible, more like something a wildlife photographer might actually capture.

It scored 5.90 to 6.60 across four images. Not a floor. Worse than a floor, because at least with a total failure you know something fundamental broke. These images looked like regular wolves with some minor texture ambiguity. Was that an owl? Was it just the lighting? The hybrid commitment was so low that the images read as AI glitches rather than artistic choices.

The same failure pattern appeared in hidden object work. Early sessions used vague integration language, asking for objects to be subtly woven into a scene. The items appeared as identifiable objects sitting in the scene rather than becoming part of it. After 30+ images of the same result, the diagnosis was clear: the creative vision itself was underspecified. I knew what I wanted to achieve (true camouflage, dual-identity objects) but I hadn't committed to it in the language. Vague direction produces vague execution.

Committing to the vision meant spelling out exactly what integration should look like: "each object shares its shape with a scene element, seamlessly woven into the composition." That specific articulation of artistic intent, not a style preference but a precise description of how the hidden objects should function, changed everything. The gap between "subtly hidden" and "shares its shape with" is the gap between hoping the AI guesses your intention and telling it what you actually mean.

The Functional Truth Test

Hidden object work is where I learned the most carefully scored lesson about the difference between a clever idea and a viable one.

After establishing that dual-identity objects (hidden items that share their shape with a scene element rather than just sitting near one) produced the best puzzle quality, I started testing which objects could actually carry this off. The results sorted into a pattern I came to call the functional truth test.

The prompt language that makes dual-identity objects work is specific. Through dozens of iterations I landed on this construction: "each object shares its shape with a scene element, seamlessly woven into [context]." That phrase does real work. Earlier versions used the word "outline" instead of "shape," which caused Firefly to draw visible outlines around every hidden object, defeating the puzzle entirely. Versions that removed structural language altogether caused objects to stop generating. The exact wording is load-bearing. But here's what the prompt engineering work revealed: that language only works when the shape relationship is genuinely real. You can write the most precise instruction in the world and if the object has no true visual analog in the scene, the model has nothing to execute on.
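Because the exact wording is load-bearing, it can help to keep the clause as a template and lint prompts for the wording that broke earlier versions. A minimal Python sketch; `DUAL_IDENTITY_CLAUSE`, `RISKY_WORDS`, `dual_identity_prompt`, and `lint_prompt` are hypothetical names, and the scene text in the example is illustrative only.

```python
# The construction that worked, with the scene context left as a parameter.
DUAL_IDENTITY_CLAUSE = "each object shares its shape with a scene element, seamlessly woven into {context}"

# Wording problems described in the testing notes, mapped to what they caused.
RISKY_WORDS = {
    "outline": "Firefly drew visible outlines around the hidden objects",
    "subtly": "vague integration language left objects sitting in the scene",
}


def dual_identity_prompt(scene: str, context: str) -> str:
    """Append the dual-identity clause to a scene description."""
    return f"{scene}, {DUAL_IDENTITY_CLAUSE.format(context=context)}"


def lint_prompt(prompt: str) -> list[str]:
    """Return warnings for wording that broke hidden-object prompts in testing."""
    lowered = prompt.lower()
    warnings = [f"'{word}': {effect}" for word, effect in RISKY_WORDS.items() if word in lowered]
    if "shares its shape with" not in lowered:
        warnings.append("no structural clause: hidden objects may stop generating")
    return warnings


p = dual_identity_prompt("Victorian curiosity cabinet, hidden object puzzle", "the cabinet's brass fittings")
print(p)
print(lint_prompt(p))  # []
print(lint_prompt("Apothecary shelves with objects subtly hidden"))
```

A linter can only check wording. Whether the shape relationship is genuinely real, which is the part that decides success, still takes judgment.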

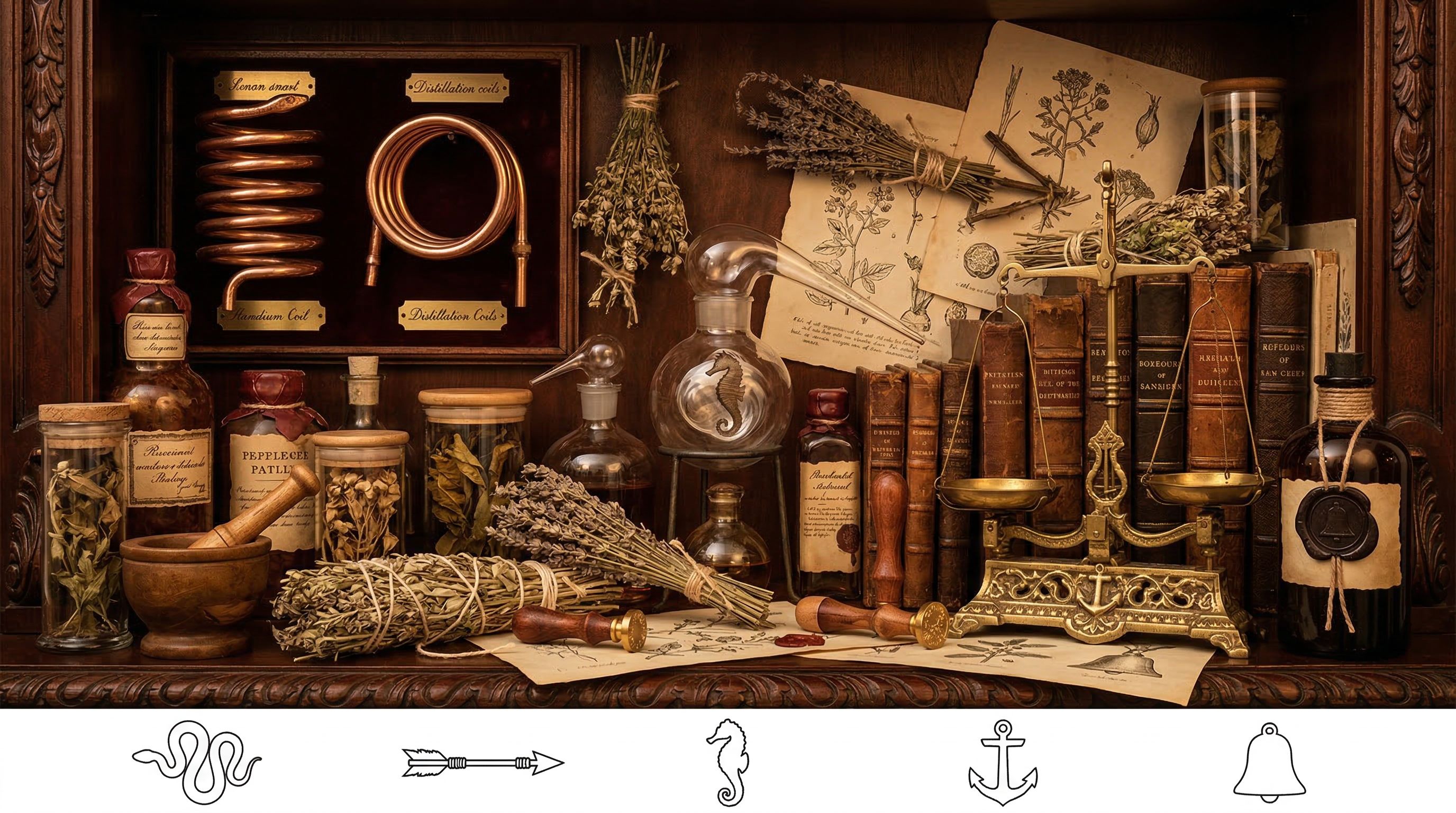

Objects that passed the functional truth test had real analogs in their scene context. An arrow became a barometer pointer needle in a Victorian cabinet, because pointer needles actually are arrow-shaped, by mechanical necessity. A seahorse became an ornate brass bracket because Victorian hardware actually was cast in seahorse forms. A snake became a copper distillation coil in an apothecary, because condensing coils genuinely are coiled tubes. These pairings worked because they weren't creative stretches. They were things that really existed in that world. The visual logic and the historical logic agreed. The prompt could say "shares its shape with a scene element" because that was already true.

Objects that failed had the same surface cleverness but no functional grounding. An anchor was proposed as an armature stand. The shape connection exists on paper. Both are roughly T-shaped. But armature stands don't look like anchors in any real workshop context. Across eight attempts, the anchor appeared as a literal nautical anchor every time. A bell was proposed upside-down as a mortar shape. Convincing on paper, zero visual truth in practice. Across twelve attempts: zero successes. The prompt language was correct. The concept had no visual truth to latch onto.

The functional truth test isn't asking whether the connection is creative. Both were genuinely clever ideas. It's asking whether the visual logic is real. If you have to explain why an object could look like something else, the camouflage has already failed. The viewer shouldn't need to know your reasoning. They should just see the scene element first and the hidden object second, without you telling them why it works.

The visual logic has to be real before the prompt can work.

When the Puzzle Solves Itself

This one is harder to classify as a failure because the resulting image was actually beautiful.

Late in nautical scene testing, I prompted for a deadeye, a round wooden block used with lanyards to tension a ship's standing rigging, whose mounting display would share its shape with a spider. The visual logic was sound: deadeyes mounted on a display board with radiating lanyards could plausibly suggest radiating spider legs. A functional nautical display that, if you looked closely, had an unsettling arachnid quality.

Images 1, 2, and 4 delivered exactly that. The spider quality was there if you searched for it.

Image 3 built an unmistakable spider out of the deadeyes and lanyards. Central body, eight extending limbs, the whole form made explicit. Visually extraordinary, and completely useless as a puzzle. The hidden object wasn't hidden. It was the subject.

The artistic challenge of dual-identity work is that the hidden shape has to emerge, not be constructed. When the connection between item and analog is too visually strong, when the shape relationship is clear enough to be rendered rather than implied, the puzzle mechanism collapses. What was meant to be a functional nautical display became a spider portrait with nautical props.

I've since documented this as the pareidolia problem: some pairings are too legible. The fix is prompt language that keeps focus on the functional object: describe the deadeye display as period-accurate rigging equipment, in context, serving its purpose. Let the silhouette overlap emerge as a byproduct. The moment your language acknowledges the hidden shape, you're inviting it to the foreground.
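One cheap guard against inviting the hidden shape to the foreground is to check that the scene prompt never names it. A minimal Python sketch; `names_hidden_shape` is a hypothetical helper, the plural handling is deliberately crude, and both example prompts are illustrations rather than prompts from the test log.

```python
import re


def names_hidden_shape(prompt: str, hidden_shape: str, related: tuple[str, ...] = ()) -> list[str]:
    """Return words in the prompt that name the hidden shape or closely related terms.

    A single trailing "s" is stripped before matching, so "spiders" and "legs" are caught too.
    """
    targets = {hidden_shape.lower(), *(term.lower() for term in related)}
    hits = []
    for word in re.findall(r"[a-z]+", prompt.lower()):
        stem = word[:-1] if word.endswith("s") else word
        if word in targets or stem in targets:
            hits.append(word)
    return hits


functional = ("Period-accurate ship's rigging: wooden deadeyes and lanyards tensioning the shrouds, "
              "mounted on a display board aboard a working sailing ship")
leaky = "Deadeye display whose lanyards spread like spider legs"

print(names_hidden_shape(functional, "spider", ("arachnid", "leg")))  # []
print(names_hidden_shape(leaky, "spider", ("arachnid", "leg")))       # ['spider', 'legs']
```

An empty result doesn't guarantee the pairing stays hidden (image 3 above went explicit without being asked), but a non-empty one is a sign the language is already pointing at the hidden shape.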

Context Tells the Model What Register to Operate In

This sounds abstract until you see it in the scores, and then it's impossible to unsee.

In material transformation portrait testing, I discovered that prompts starting with lighting direction caused Firefly to render studio equipment as physical objects in the scene. The lights appeared as lamps in the background. The softboxes materialized as furniture. The model interpreted lighting-first language as scene description rather than photography instruction. Moving subject description to the front (action and pose, then material, then lighting) fixed it entirely and improved scores by roughly 25%.
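If prompts are assembled from parts, the ordering fix can be baked into the structure so lighting can't drift to the front. A minimal Python sketch; `PortraitPrompt` is a hypothetical name and the example subject, material, and lighting text are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class PortraitPrompt:
    """Prompt parts in the order that worked: subject (action and pose), material, lighting."""
    subject: str
    material: str
    lighting: str

    def render(self) -> str:
        # Lighting goes last so it reads as photography instruction rather than scene content.
        parts = (self.subject, self.material, self.lighting)
        return ", ".join(part.strip() for part in parts if part.strip())


prompt = PortraitPrompt(
    subject="dancer mid-leap, arms extended overhead",
    material="body rendered in hand-blown glass",
    lighting="soft key light from upper left",
)
print(prompt.render())
# dancer mid-leap, arms extended overhead, body rendered in hand-blown glass, soft key light from upper left
```

Empty parts are skipped rather than leaving stray commas, so the same structure works for quick tests that omit a material or lighting line.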

The background variable produced similar data across every research track. In hybrid creature testing, white backgrounds averaged 7.20. Turquoise gradients averaged 9.58. That 33% gap (2.38 points) doesn't come from turquoise being a special color. It comes from turquoise gradient backgrounds being a signal to Firefly that this is professional photography. Adobe Stock doesn't contain white-background wildlife portraits. When I provided a white background, I accidentally told the model I was doing something other than professional photography.
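Percentages like the 33% above are relative to the lower score, so it's worth keeping the absolute point gap next to them. A short Python sketch of both calculations using the averages quoted in this newsletter; `point_gap` and `relative_gain` are hypothetical helper names.

```python
def point_gap(baseline: float, improved: float) -> float:
    """Absolute difference on the 10-point composite."""
    return improved - baseline


def relative_gain(baseline: float, improved: float) -> float:
    """Improvement as a fraction of the baseline (lower) score."""
    return (improved - baseline) / baseline


comparisons = {
    "white vs turquoise gradient background": (7.20, 9.58),
    "'owl features' vs 'barn owl facial disc'": (6.60, 9.70),
}
for label, (low, high) in comparisons.items():
    print(f"{label}: +{point_gap(low, high):.2f} points, +{relative_gain(low, high):.0%}")
```

This prints +2.38 points (+33%) for the background comparison and +3.10 points (+47%) for the owl-specificity comparison from the hybrid creature tests.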

The same principle appeared in hidden picture work, where art styles that implied compositional organization (symmetric design traditions, rigid geometric systems, framed-scene approaches) produced worse puzzle integration than styles with organic scatter and tonal variation. Choosing an art style isn't just an aesthetic decision. It's choosing a set of compositional rules, and some compositional rules actively work against camouflage. A mandala demands symmetry. An Art Nouveau poster demands decorative borders. Neither leaves room for the visual chaos that hides things. The artistic vision has to include the hiding logic from the start.

Context words are instructions. The register they establish shapes every subsequent decision Firefly makes.

Moving subject description to the front improved scores by roughly 25%. Context words are instructions.

What This Actually Gives You

There's a practical argument for documenting failures: it stops you from running the same experiment twice. The anchor and the bell failed across eight and twelve attempts respectively. That data lives in the log. The next time either object comes up as a candidate, I know exactly what happened and why, without rebuilding the test from scratch.
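The log doesn't need to be elaborate to do this job; it only needs to be queryable before a candidate gets re-tested. A minimal Python sketch; the `PairingResult` schema and `prior_results` helper are hypothetical, and the only entries are the two failed pairings described earlier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PairingResult:
    """One documented hidden-object pairing and how it performed."""
    hidden_object: str
    scene_element: str
    attempts: int
    successes: int
    note: str


# The two failed pairings described earlier; the schema itself is illustrative.
LOG = [
    PairingResult("anchor", "armature stand", 8, 0, "rendered as a literal nautical anchor every time"),
    PairingResult("bell", "mortar (upside-down bell)", 12, 0, "no visual truth for the model to latch onto"),
]


def prior_results(hidden_object: str, log: list[PairingResult] = LOG) -> list[PairingResult]:
    """Look up every logged pairing for a candidate object before re-testing it."""
    key = hidden_object.strip().lower()
    return [entry for entry in log if entry.hidden_object == key]


for entry in prior_results("Anchor"):
    print(f"{entry.hidden_object} as {entry.scene_element}: "
          f"{entry.successes}/{entry.attempts}, {entry.note}")
```

Logging the attempt count alongside the outcome is what separates "tried once, didn't work" from "zero for twelve".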

But there's a more useful thing failure documentation gives you: the ability to distinguish between a concept that doesn't work and a concept you haven't figured out how to prompt yet.

The eldritch session felt like a failure at the time. "This theme doesn't translate to Firefly" would have been a reasonable conclusion. But the scored data pointed somewhere more specific: the failure was in the language, not the concept. Surreal biology absolutely translates to Firefly. It just requires species-level specificity instead of literary mood words. That distinction only becomes visible when you've documented what didn't work and why.

Most of the patterns I've identified as proven (the gradient hierarchy, the construction language requirement, the functional truth test, the material-function contradiction principle) started as documented failures that didn't match my hypotheses. The failure told me where to look next. The score told me whether I found it.

All testing conducted in Adobe Firefly using models including Firefly Image 5, Nano Banana Pro, and Nano Banana 2. Scored using a weighted five-dimension rubric: Visual Quality (30%), Prompt Alignment (25%), Consistency (15%), Uniqueness (15%), X Engagement Potential (15%). Minimum four generations per variation before drawing conclusions.
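For anyone who wants to reproduce the scoring, here is a minimal Python sketch of the weighted composite and the four-generation minimum. The weights are the ones listed above; the dimension keys (e.g. `x_engagement` for X Engagement Potential), the function names, and the assumption that each dimension is scored 0 to 10 are illustrative.

```python
import math
import statistics

# Rubric weights as listed above. They sum to 1.0, so the composite stays on the 0-10 scale.
WEIGHTS = {
    "visual_quality": 0.30,
    "prompt_alignment": 0.25,
    "consistency": 0.15,
    "uniqueness": 0.15,
    "x_engagement": 0.15,
}
assert math.isclose(sum(WEIGHTS.values()), 1.0)

MIN_GENERATIONS = 4


def composite(scores: dict[str, float]) -> float:
    """Weighted composite for one image; assumes each dimension is scored 0-10."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    return sum(weight * scores[dim] for dim, weight in WEIGHTS.items())


def variation_average(images: list[dict[str, float]]) -> float:
    """Mean composite for a prompt variation, refusing to conclude from too few images."""
    if len(images) < MIN_GENERATIONS:
        raise ValueError(f"need at least {MIN_GENERATIONS} generations, got {len(images)}")
    return statistics.mean(composite(image) for image in images)


# Hypothetical image: strong everywhere except uniqueness.
image = {"visual_quality": 8.4, "prompt_alignment": 8.4, "consistency": 8.4,
         "uniqueness": 7.0, "x_engagement": 8.4}
print(round(composite(image), 2))  # 8.19
```

In this made-up example, holding uniqueness at 7.0 while everything else sits at 8.4 costs about 0.2 points on the composite, which is how a single 15% dimension can quietly cap an otherwise well-executed image.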