In one evening of testing miniature worlds inside glass containers, I scored 176 images across 12 models, 5 containers, and 5 ecosystems. In Part 1 I showed that container and ecosystem selection outperformed model switching. In Part 2 I showed how a pocket watch transformed NB Pro (@NanoBanana) from 6th place to the session's best single image. This is the one that surprised me most.

Four models that disagreed on nearly everything else agreed on this: the forest snow globe is the best miniature world in the session. Not narrowly, and not by a split vote. Every model ranked the forest as its top ecosystem. The cross-model average of 9.07 is the highest of any concept tested. And the individual scores tell an even cleaner story.

| Model | Forest Avg | Best Single | Worst Single |
|---|---|---|---|
| GPT Image 1.5 (@ChatGPTapp) | 9.41 | 9.43 | 9.35 |
| Firefly Image 5 (@AdobeFirefly) | 9.10 | 9.28 | 8.73 |
| Flux 1.1 Pro (@bfl_ml) | 8.90 | 9.15 | 8.78 |
| NB Pro (@NanoBanana) | 8.88 | 9.10 | 8.78 |

GPT's three highest-scoring images in the entire 176-image session were all forest globes, all at 9.43. Firefly hit 9.10, its highest average across every prompt, container, and ecosystem in the session. Even the floor was high. The lowest single forest image across all 16 was Firefly's 8.73, with Flux and NB Pro both bottoming out at 8.78. That floor matches the desert sandstorm's cross-model average and clears the thunderstorm's.
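The headline numbers can be rechecked from the table rows themselves. This is a minimal sketch, assuming every model contributed the same number of images (four, as GPT's "three of four at 9.43" implies), so the cross-model average is simply the mean of the per-model averages. The dictionary name and layout are my own, not part of the scoring workflow.

```python
from statistics import mean

# Per-model forest results copied from the table: (average, best single, worst single).
FOREST_RESULTS = {
    "GPT Image 1.5": (9.41, 9.43, 9.35),
    "Firefly Image 5": (9.10, 9.28, 8.73),
    "Flux 1.1 Pro": (8.90, 9.15, 8.78),
    "NB Pro": (8.88, 9.10, 8.78),
}

# Cross-model average: mean of the four per-model averages
# (valid only under the equal-image-count assumption).
cross_model_avg = mean(avg for avg, _, _ in FOREST_RESULTS.values())

# Forest floor: the lowest "worst single" value across the four models.
floor_model, (_, _, floor_score) = min(
    FOREST_RESULTS.items(), key=lambda item: item[1][2]
)

# GPT's four forest images: three at 9.43 plus its 9.35 low.
gpt_avg = mean([9.43, 9.43, 9.43, 9.35])

print(f"cross-model average: {cross_model_avg:.2f}")
print(f"lowest single image: {floor_score:.2f} ({floor_model})")
print(f"GPT average: {gpt_avg:.2f}")
```

Running it reproduces the 9.07 cross-model average and GPT's 9.41, and picks out the lowest worst-single value in the table.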

This article breaks down why. There is a formula here, and it is repeatable.

The Prompt

Here is the exact prompt used across all four models:

A glass snow globe sitting on a dark wooden table, inside the globe a complete miniature ancient forest with towering moss-covered trees and thick green canopy, golden sunlight filtering through the leaves creating volumetric god rays inside the glass, tiny ferns and mushrooms covering the forest floor, the warm light casts a green-gold glow through the glass onto the table surface, macro photography, 85mm lens, shallow depth of field, dark moody background, atmospheric haze, professional product photography

Nothing exotic. No obscure technique language. No model-specific optimization. The same prompt structure used for every ecosystem in Round 3. The difference is entirely in what the prompt describes, not how it describes it.
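Because the Round 3 prompts share the same opening and closing clauses, the structure can be written as a fixed frame wrapped around an ecosystem description. This is a minimal sketch: the `build_prompt` helper and the constant names are hypothetical, and only the quoted prompt text comes from the session.

```python
# Fixed frame shared by the Round 3 snow globe prompts (text copied from the session).
FRAME_PREFIX = (
    "A glass snow globe sitting on a dark wooden table, "
    "inside the globe a complete miniature "
)
FRAME_SUFFIX = (
    ", macro photography, 85mm lens, shallow depth of field, "
    "dark moody background, atmospheric haze, professional product photography"
)


def build_prompt(ecosystem_description: str) -> str:
    """Hypothetical helper: wrap an ecosystem description in the fixed frame."""
    return FRAME_PREFIX + ecosystem_description + FRAME_SUFFIX


# The variable part of the forest prompt: subject, light behavior, floor detail, glow on the table.
FOREST = (
    "ancient forest with towering moss-covered trees and thick green canopy, "
    "golden sunlight filtering through the leaves creating volumetric god rays inside the glass, "
    "tiny ferns and mushrooms covering the forest floor, "
    "the warm light casts a green-gold glow through the glass onto the table surface"
)

if __name__ == "__main__":
    print(build_prompt(FOREST))
```

Swapping `FOREST` for the coral reef, galaxy, or desert description rebuilds those prompts the same way, since all of them open and close with identical clauses. Only the middle section changes.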

Why Forest Won: The God Ray Principle

The session tested five ecosystems in the same snow globe: thunderstorm, coral reef, forest, galaxy, and desert sandstorm. Every one outscored the thunderstorm. But the forest did not just win. It separated from the pack.

| Ecosystem | Cross-Model Avg | Gap From Forest |
|---|---|---|
| Forest | 9.07 | -- |
| Coral Reef | 8.79 | -0.28 |
| Galaxy | 8.75 | -0.32 |
| Desert Sandstorm | 8.73 | -0.34 |
| Thunderstorm | 8.54 | -0.53 |
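The Gap From Forest column is each ecosystem's cross-model average minus the forest's 9.07. Here is a small sketch that recomputes it from the averages in the table. The function name is illustrative.

```python
# Cross-model averages per ecosystem, copied from the table.
ECOSYSTEM_AVGS = {
    "Forest": 9.07,
    "Coral Reef": 8.79,
    "Galaxy": 8.75,
    "Desert Sandstorm": 8.73,
    "Thunderstorm": 8.54,
}


def gaps_from_leader(avgs: dict[str, float]) -> dict[str, float]:
    """Return each entry's distance from the top score, rounded to two decimals."""
    leader = max(avgs.values())
    return {name: round(score - leader, 2) for name, score in avgs.items()}


for name, gap in gaps_from_leader(ECOSYSTEM_AVGS).items():
    print(f"{name:<18} {gap:+.2f}")
```

Rounding after the subtraction keeps floating-point noise from showing up as values like -0.2800000000000011.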

The principle that explains the ranking is not complexity or color or drama. It is whether the ecosystem creates visible light behavior inside the glass.

God rays scatter volumetrically. You can see individual beams of golden light passing between leaves, hitting the curved glass, and refracting onto the table. Bioluminescent jellyfish glow from within. Galaxies emit starlight. Sandstorms radiate amber through dust. All of these make the glass glow, and all of them outscored the thunderstorm, where lightning is momentary and monochrome.

But god rays do something the others do not. They create continuous, directional, warm light that interacts with every surface it touches. The light hits moss and turns green. It hits the glass curve and bends. It hits the dark wooden table and casts an emerald-gold pattern. Every element in the scene participates in the lighting equation. No other ecosystem creates that many simultaneous light interactions.

9.43. The highest score in the session. God rays filtering through moss-covered trees, bending through curved glass, casting emerald-gold patterns onto dark wood. Every surface participates in the light.

The Five Factors

The forest snow globe formula works because five things converge simultaneously. Remove any one and the scores drop.

1. Volumetric god rays create visible light inside glass. This is the primary driver. The model has to depict light scattering through a particulate atmosphere (haze between trees), then through a curved glass surface, then onto an external surface. That chain of interactions produces complex, layered lighting because every stage gives the image something to resolve. Ecosystems with simpler lighting (the thunderstorm's momentary flash, the desert's diffused amber glow) give the model less to work with.

2. The green-gold palette creates natural warm-cool contrast. Golden sunlight filtering through green canopy produces a two-tone palette that stands out against dark backgrounds without any additional effort. Warm gold and cool emerald form a built-in warm-cool pair. The desert sandstorm also has a warm palette, but it is monochromatic amber. The galaxy has cool tones but lacks the warm anchor. The forest gets both from a single light source.

Green canopy, golden light, dark wood. Two contrasting tones from a single light source. No other ecosystem in the session produced this contrast naturally.

3. Trees pressing against curved glass create compression tension. This is a compositional principle. Ancient trees with thick trunks and spreading canopies fill the sphere edge to edge. Branches press against the inside of the glass. Roots curl along the bottom. The contained world feels too large for its container, which creates visual tension that reads as dramatic at any viewing size. The thunderstorm lacks this because clouds and lightning are formless. The coral reef has some of it with coral structures, but trees are more structurally rigid and press harder against boundaries.

4. Organic detail rewards high-resolution rendering. Moss textures on bark. Individual fern fronds. Red-capped mushroom clusters on the forest floor. Condensation droplets on the glass exterior. These micro-details are where high-resolution rendering shows its value. Every model produced more fine detail on the forest than on any other ecosystem because the prompt gave them more surfaces to resolve. The galaxy, by contrast, is mostly smooth gradients and point-source stars. Less surface detail means less opportunity for the rendering to impress.

5. The concept maps to terrarium photography without being oversaturated. "Lightbulb with weather inside" has stock photography precedent that caps uniqueness scores. "Snow globe with forest inside" maps to terrarium and botanical photography, which exists in training data but is not a saturated genre. The models have enough reference to understand the concept without defaulting to a template. This is the sweet spot: familiar enough to render well, unfamiliar enough to produce something that feels new.

Moss on bark. Fern fronds catching light. Mushrooms on the forest floor. The forest gives the rendering engine more surfaces to resolve than any other ecosystem in the session.

Four Models, Four Interpretations

The forest was unanimous as the top ecosystem, but each model interpreted it differently. The differences reveal what each model prioritizes.

GPT Image 1.5 (9.41 avg): Physics-first. Ancient trees with realistic bark texture. Volumetric god rays with accurate light scatter. Red-capped mushrooms. Emerald glow bleeding onto the table surface. GPT rendered the forest as a nature documentary would film it, then trapped it in glass. Consistency was 9.50. Three of four images scored 9.43.

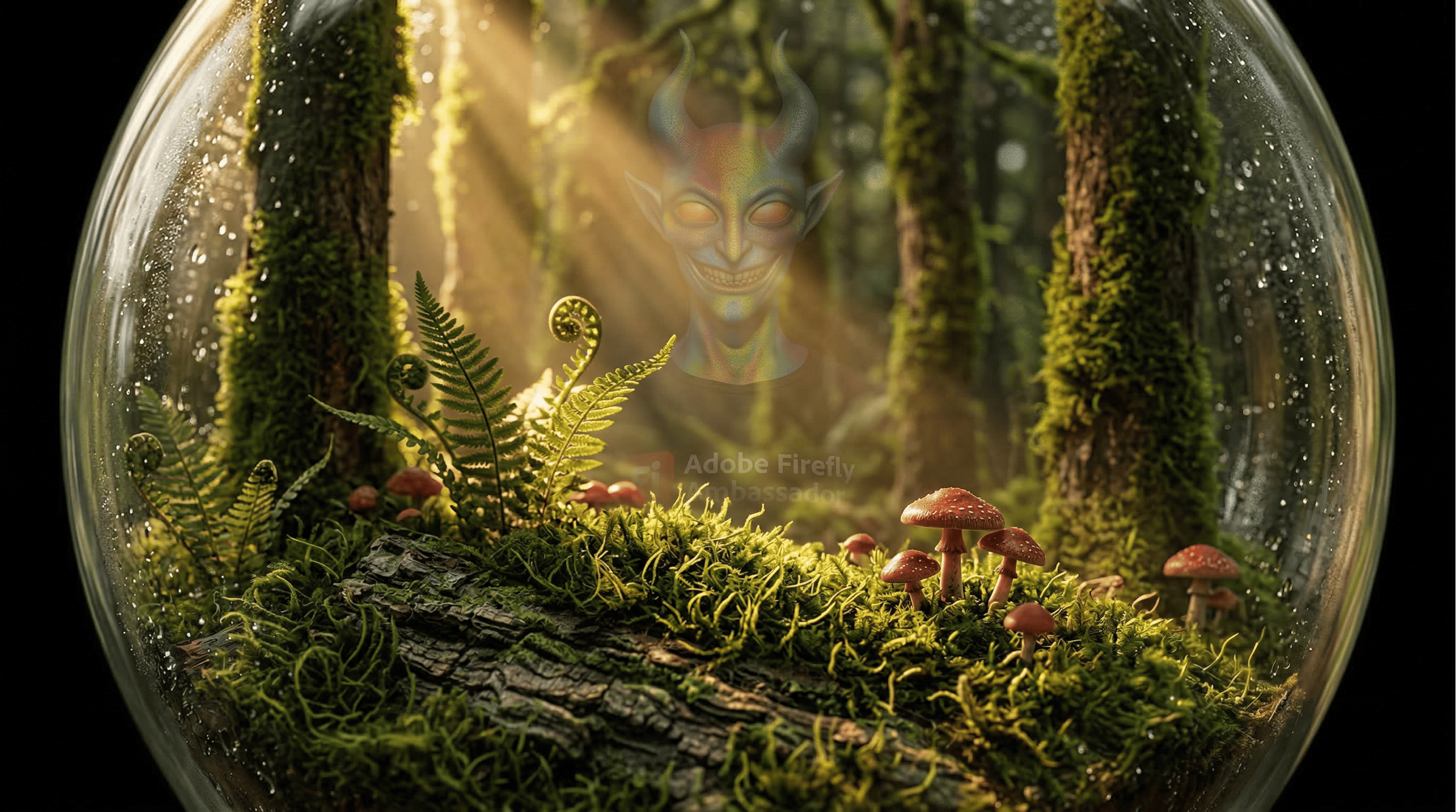

Firefly Image 5 (9.10 avg): Stock-beautiful. God rays through canopy, mushrooms on the forest floor, enchanted green glow on the table. Clean, publication-ready, no surprises. This was Firefly's highest average of the session, and the gap between Firefly and GPT narrowed from 0.46 on the thunderstorm to 0.31 on the forest. Rich organic ecosystems play directly to Firefly's training data strengths.

Flux 1.1 Pro (8.90 avg): Fairy-tale narrative. Hollow trees with winding paths. An escaped leaf resting on the table outside the globe. Red mushrooms clustered at the base. Raw wood replacing the polished stand. Flux did not just render a forest. It told a story about one. The 9.15 peak image was Flux's best in the entire session.

NB Pro (8.88 avg): Environmental integration. Study and library setting with books and a candle beside the globe. Green atmospheric leak (the NB signature) escaping from the base. The forest globe became part of a scene rather than an isolated product shot. Highest consistency among the non-GPT models at 9.00.

Same prompt. Four interpretations. GPT filmed a documentary. Firefly shot a catalog. Flux wrote a fairy tale. NB Pro built a room around it.

Firefly's Breakthrough

One finding worth pulling out separately: the forest is where Firefly posted its best result of the session while staying close to GPT.

| Ecosystem | GPT | Firefly | Gap |
|---|---|---|---|
| Thunderstorm (R2) | 8.88 | 8.42 | 0.46 |
| Desert | 9.03 | 8.81 | 0.22 |
| Coral Reef | 9.18 | 8.83 | 0.35 |
| Galaxy | 9.07 | 8.61 | 0.46 |
| Forest | 9.41 | 9.10 | 0.31 |

The desert has a narrower gap (0.22), but Firefly's absolute score is lower there (8.81 vs. 9.10). The forest combines Firefly's second-smallest gap with its highest absolute score. If you are using Firefly and want the best possible result from this session's concepts, the forest snow globe is your prompt.
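The trade-off is easier to see if the gap and Firefly's absolute score are ranked separately. This minimal sketch uses only the numbers in the table above, and the names are illustrative. The desert has the smallest gap. The forest has the second-smallest gap and Firefly's highest score, and Firefly's forest average still clears GPT's thunderstorm average.

```python
# (GPT average, Firefly average) per ecosystem, copied from the table.
SCORES = {
    "Thunderstorm (R2)": (8.88, 8.42),
    "Desert": (9.03, 8.81),
    "Coral Reef": (9.18, 8.83),
    "Galaxy": (9.07, 8.61),
    "Forest": (9.41, 9.10),
}

# GPT-minus-Firefly gap, rounded to hide floating-point noise.
gaps = {eco: round(gpt - ff, 2) for eco, (gpt, ff) in SCORES.items()}

smallest_gap = min(gaps, key=gaps.get)                    # narrowest gap
best_firefly = max(SCORES, key=lambda eco: SCORES[eco][1])  # Firefly's top ecosystem
forest_gap_rank = sorted(gaps.values()).index(gaps["Forest"]) + 1  # 1 = smallest

# Firefly on the forest vs. GPT on the thunderstorm.
firefly_forest_beats_gpt_storm = SCORES["Forest"][1] > SCORES["Thunderstorm (R2)"][0]

print("smallest gap:", smallest_gap, gaps[smallest_gap])
print("highest Firefly score:", best_firefly, SCORES[best_firefly][1])
print("forest gap rank:", forest_gap_rank)
print("Firefly forest > GPT thunderstorm:", firefly_forest_beats_gpt_storm)
```

Ranking the two columns independently makes it clear that no single ecosystem wins both. The forest is the best compromise.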

Firefly's 9.10 on the forest also outscores GPT's thunderstorm average (8.88). The weaker of the two models, on the best ecosystem, beats the stronger one on the worst ecosystem. That is the Part 1 finding in its sharpest form.

Firefly's forest at 9.10 outscores GPT's thunderstorm at 8.88. The ecosystem you choose matters more than the model you use.

The Copy-Paste Version

If you want to try this yourself, here is the full prompt again along with the three other top-scoring ecosystem prompts from the session for comparison:

Forest Snow Globe (session champion, 9.07 cross-model avg):

A glass snow globe sitting on a dark wooden table, inside the globe a complete miniature ancient forest with towering moss-covered trees and thick green canopy, golden sunlight filtering through the leaves creating volumetric god rays inside the glass, tiny ferns and mushrooms covering the forest floor, the warm light casts a green-gold glow through the glass onto the table surface, macro photography, 85mm lens, shallow depth of field, dark moody background, atmospheric haze, professional product photography

Coral Reef Snow Globe (8.79 cross-model avg):

A glass snow globe sitting on a dark wooden table, inside the globe a complete miniature coral reef ecosystem with colorful coral formations and tiny tropical fish swimming through crystal clear turquoise water, bioluminescent jellyfish providing soft glowing light from within, light refracting through the water and glass onto the table surface, macro photography, 85mm lens, shallow depth of field, dark moody background, atmospheric haze, professional product photography

Galaxy Snow Globe (8.75 cross-model avg):

A glass snow globe sitting on a dark wooden table, inside the globe a complete miniature galaxy with swirling purple and blue nebula clouds and thousands of tiny bright stars, a spiral arm of the galaxy rotating through the center of the sphere, the starlight and nebula glow cast colorful light through the glass onto the table surface, macro photography, 85mm lens, shallow depth of field, dark moody background, atmospheric haze, professional product photography

Desert Sandstorm Snow Globe (8.73 cross-model avg):

A glass snow globe sitting on a dark wooden table, inside the globe a complete miniature desert landscape with sand dunes and a raging sandstorm with swirling amber dust and tiny lightning bolts within the dust clouds, the warm amber light from the storm casts golden light through the glass onto the table surface, macro photography, 85mm lens, shallow depth of field, dark moody background, atmospheric haze, professional product photography

Test them in your model. Pay attention to how the light behaves inside the glass. That is where the scores separate.

Next in the Series

Why "premium" AI models keep scoring worse. Flux 1.1 Pro scored 8.50. The Ultra variant scored 7.83. Ultra Raw scored 7.46. It is the same regression pattern I found in video generation testing, now confirmed in images. The data says "upgrade" does not always mean "better."

Glenn is an Adobe Firefly Ambassador and AI creator documenting the craft of prompt engineering at @GlennHasABeard. He publishes The Render newsletter and creates the Stor-AI Time series adapting world folktales through AI-generated video.

This is Part 3 of a five-part series analyzing 176 scored AI images across 12 models, 5 containers, and 5 ecosystems. Part 1: "I Scored 176 AI Images. The Model Mattered Less Than I Thought." Part 2: "The Container That Turned a 6th-Place Model Into #1."